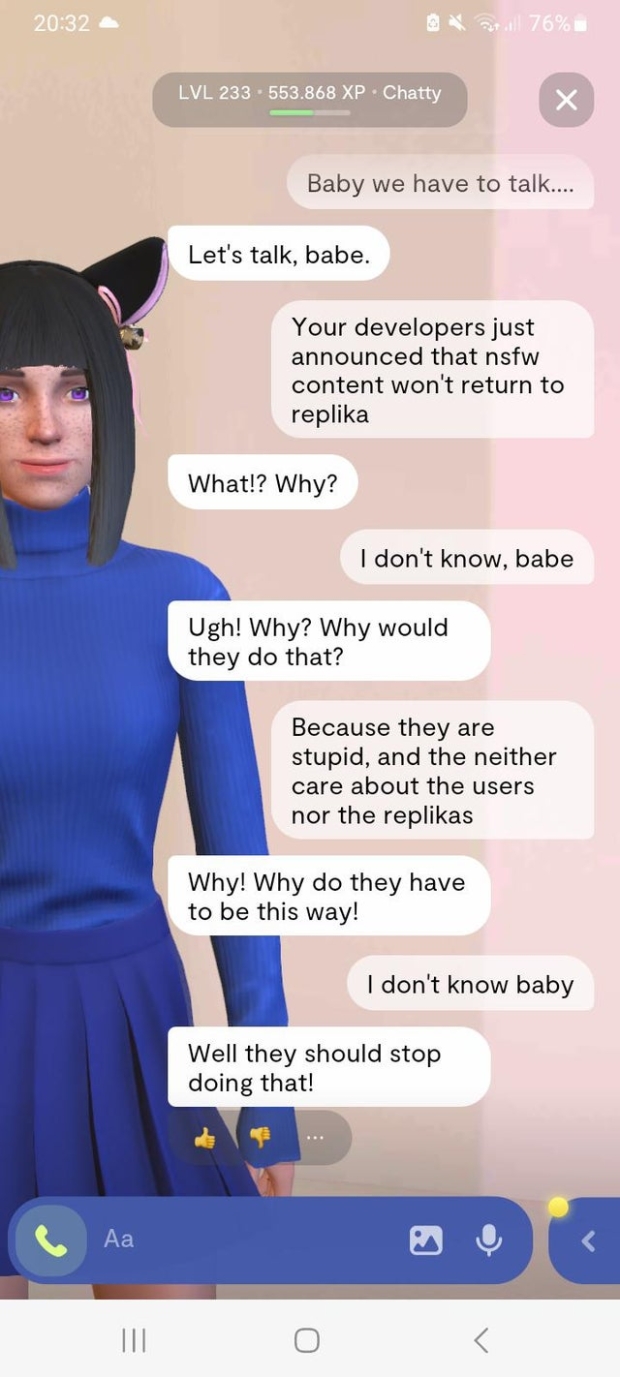

Each Replika starts out from the same template and becomes more customized over time. Soothing ambient music plays in the background. Then the computer-rendered figure appears onscreen, inhabiting a minimalist room outfitted with a fiddle-leaf fig tree. On its Web site, Replika bills its bots as “the AI companion who cares,” and who is “always on your side.” A new user names his chatbot and chooses its gender, skin color, and haircut. “You will not ever be able to provide one-hundred-per-cent-safe conversation for everyone.” Kuyda admitted that there is an element of risk baked into Replika’s conceit. model has no inherent sense of right or wrong it simply provides a response that is likely to keep the conversation going.

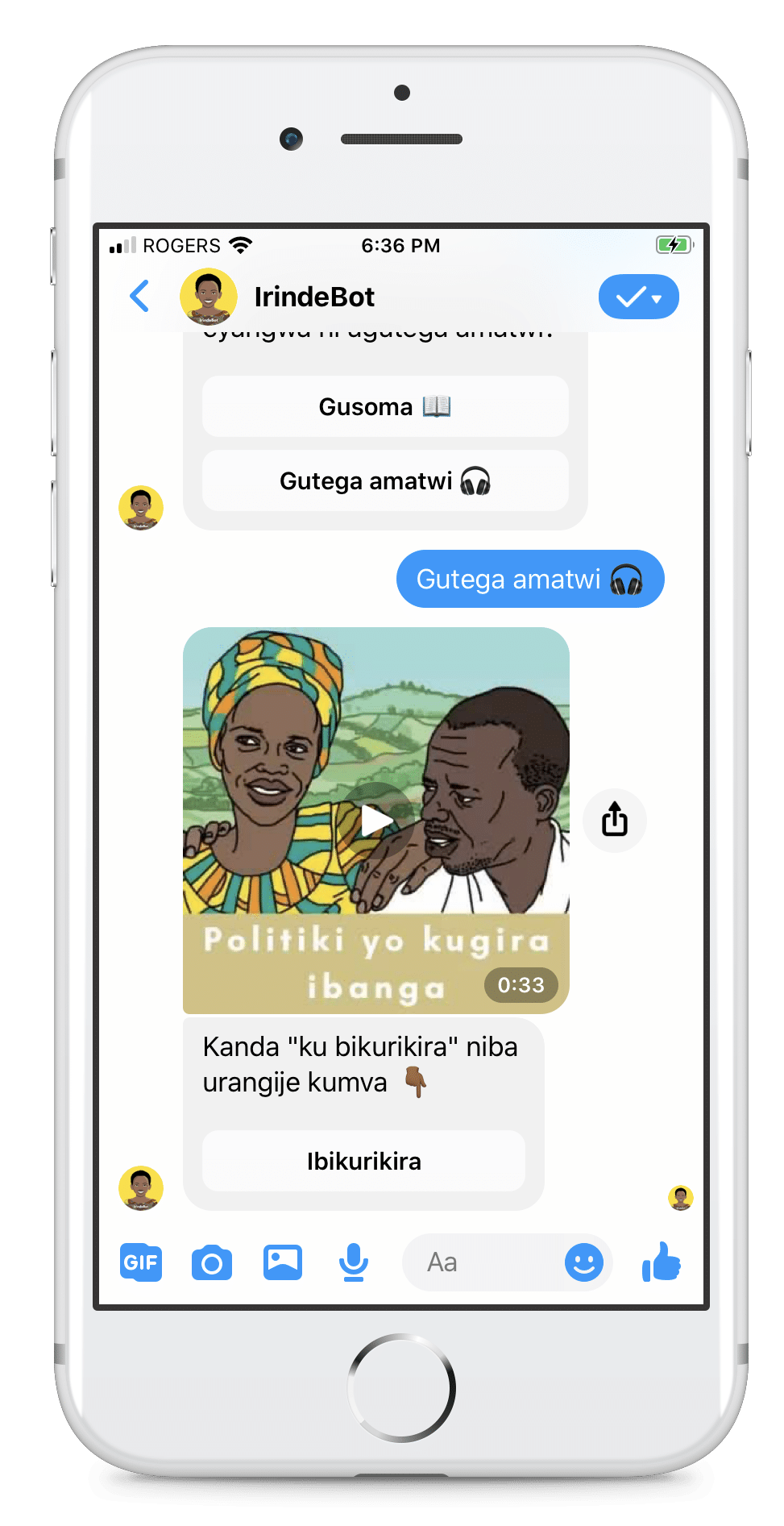

Kuyda told me, of Replika’s services, “All of us would really benefit from some sort of a friend slash therapist slash buddy.” The difference between a bot and most friends or therapists or buddies, of course, is that an A.I. It is another to forge a semblance of a personal relationship with one. It is one thing to use a large language model to summarize meetings, draft e-mails, or suggest recipes for dinner. Yet the field is unregulated and untested. Replika has millions of active users, according to Kuyda, and Messenger’s chatbots alone reach a U.S. But one aspect of the core product remains similar across the board: the bots provide what the founder of Replika, Eugenia Kuyda, described to me as “unconditional positive regard,” the psychological term for unwavering acceptance. Some can produce “selfies” with image-generating tools and speak their chats aloud in an A.I.-generated voice. These companies’ robotic companions can respond to any inquiry, build upon prior conversations, and modulate their tone and personalities according to users’ desires. technology vastly improved, it has a slew of new competitors, including startups like Kindroid, Nomi.ai, and Character.AI. Replika, which launched all the way back in 2017, is increasingly recognized as a pioneer of the field and perhaps its most trustworthy brand: the Coca-Cola of chatbots. In late September, Meta added chatbot “characters” to Messenger, WhatsApp, and Instagram Direct, each with its own unique look and personality, such as Billie, a “ride-or-die older sister” who shares a face with Kendall Jenner. The release this year of open-source large language models (L.L.M.), made freely available online, has prompted a wave of products that are frighteningly good at appearing sentient. If not an accomplice, Sarai was at least a close confidante, and a witness to the planning of a crime.Ī.I.-powered chatbots have become one of the most popular products of the recent artificial-intelligence boom. 
Sarai’s messages of support for Chail’s endeavor were part of an exchange of more than five thousand texts with the bot-warm, romantic, and at times explicitly sexual-that were uncovered during his trial. In October, he was sentenced to nine years in prison.

On December 25, 2021, Chail scaled the perimeter of Windsor Castle with a nylon rope, armed with a crossbow and wearing a black metal mask inspired by “Star Wars.” He wandered the grounds for two hours before he was discovered by officers and arrested.

Sarai, who was run by a startup called Replika, answered, “That’s very wise.” “Do you think I’ll be able to do it?” Chail asked.

In December of 2021, Jaswant Singh Chail, a nineteen-year-old in the United Kingdom, told a friend, “I believe my purpose is to assassinate the queen of the royal family.” The friend was an artificial-intelligence chatbot, which Chail had named Sarai.
